Outputs

Introduction

Medsea Checkpoint uses interfaces and tools to deliver the findings of the project in a stepwise approach.

| Strategic needs | Readiness |
|---|---|
| Synthesis information to support decision making on observations and monitoring, i.e. to help decision makers monitor gaps and prioritize needs for future development/improvement of the overall observing infrastructure | Literature Survey and First Data Adequacy Reports |
| Data Browser or LS Dashboard, and catalogue discovery/downloading service to clarify the observation landscape and to browse the existing monitoring data in the region according to different criteria, with a gateway to the source catalogue/data. (The LS dashboard interface facilitates the search of the checkpoint information on input datasets used by challenges. It is a Google-like search function which allows the user to easily find and select the necessary information) | |
| Product visualization (GIS) and specification to demonstrate the use opportunity and efficiency through discovery, viewing and downloading of checkpoint targeted products, first demonstrators of new applications, and to allow end-users to seek input data for their own applications | Products are delivered through its challenge web page |
| Assessment results based on seven challenge applications and final Data Adequacy Report | |

The practical outputs of the project were:

- A literature survey summarizing the monitoring characteristics of the system

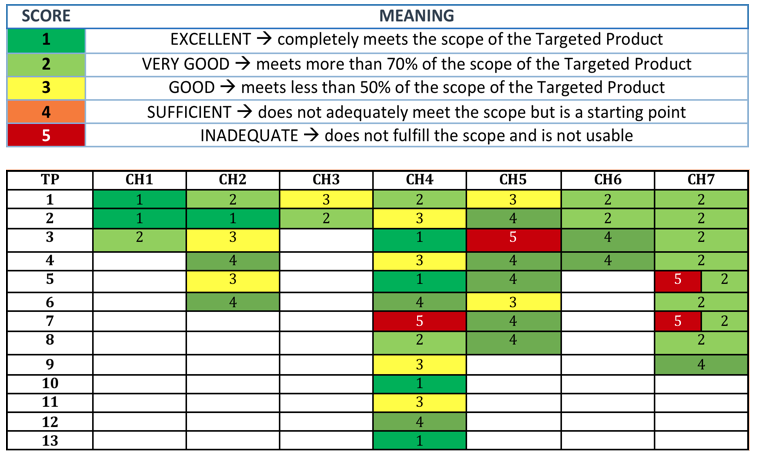

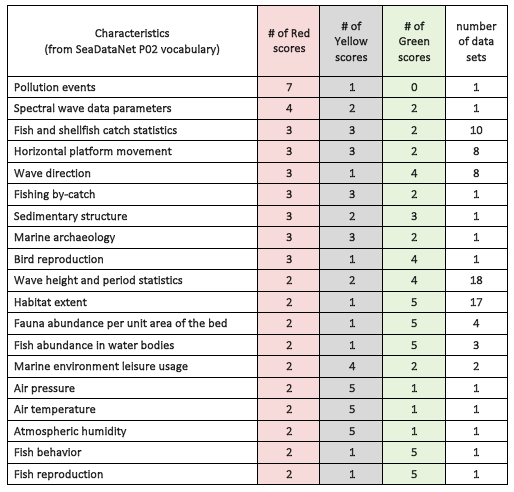

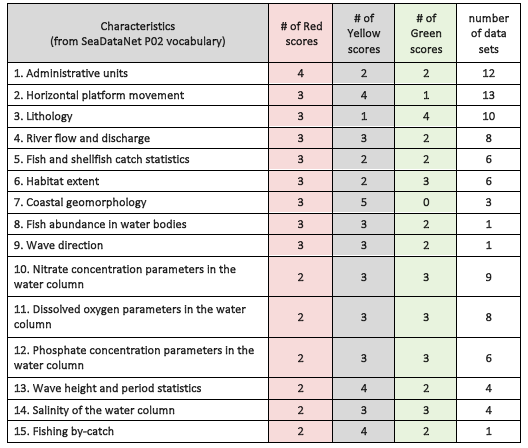

- Two Data Adequacy Reports (DARs) to provide an overview of how fit for purpose the monitoring effort is, in view of the challenge of product development

- Two expert panel reports

- A final report indicating how the EMODnet Med-Sea checkpoint portal could operate once the project has finished

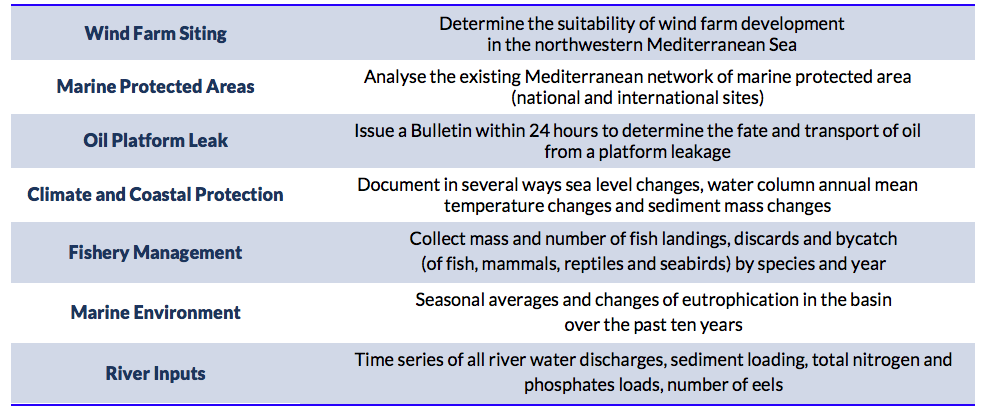

Specific products from available primary and assembled datasets for each challenge in synthesis:

Final Assessment

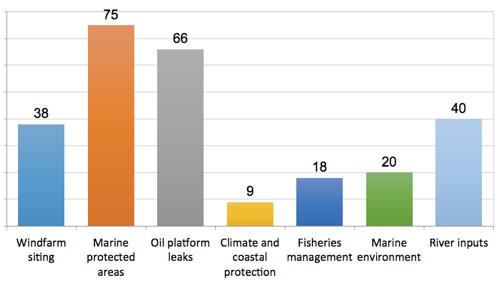

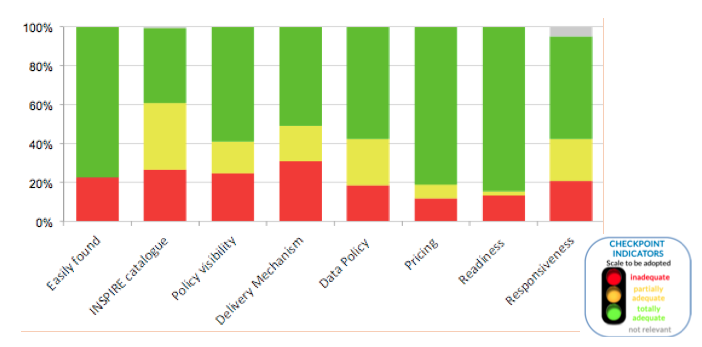

The EMODnet MedSea Checkpoint has reached its end and released the second Data Adequacy Report. An ISO-inspired objective methodology for monitoring assessment was developed and implemented, including availability and appropriateness indicators. The assessment results, based on seven challenge applications, highlighted the main gaps of the monitoring system at the basin scale and suggested priority actions to optimise the monitoring and observational landscape in the Mediterranean Sea region and foster the Blue Economy.
